AI in Peer Review: The New “Reviewer 2” or Scholar’s Ally? (2026)

AI is not the new “Reviewer 2” in academia. The traditional Reviewer 2 is stereotyped as irrationally harsh, whereas AI currently functions as a technical assistant. Data from 2025 indicate that AI-assisted reviews are in fact more lenient (acceptance rates roughly 4.9 percentage points higher near the decision threshold), yet they lack the critical, ethical, and contextual nuance of human experts.

Executive Summary

The “Reviewer 2” trope has long haunted academic corridors, symbolizing the gatekeeper whose feedback is as unconstructive as it is biting. As we move into 2026, a new entity has entered the fray: Generative Artificial Intelligence.

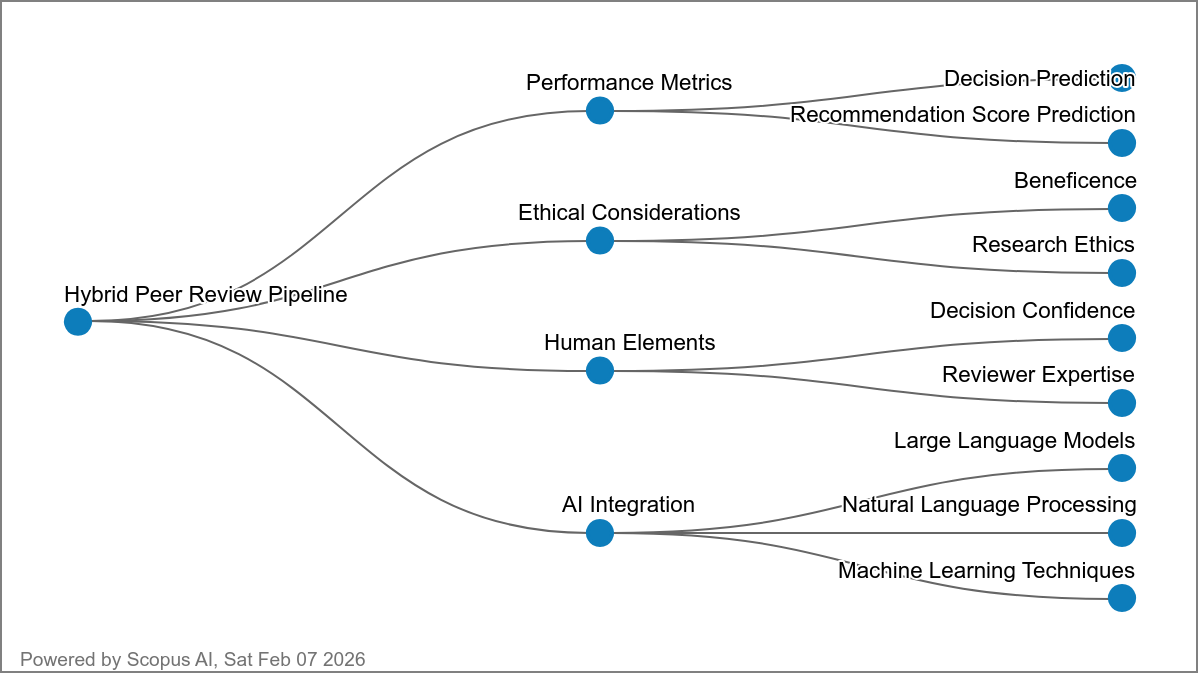

This article deconstructs the myth of Reviewer 2 using recent empirical data and evaluates whether AI tools are replicating these exclusionary patterns or offering a path toward more equitable publishing. By synthesizing findings from 2015–2025, we argue that the future of scholarship relies not on AI replacing humans, but on a hybrid “Human-in-the-loop” framework that balances algorithmic efficiency with scholarly wisdom.

Introduction

Peer review is the “gold standard” of academic integrity, yet it is currently under immense strain. The volume of submissions is skyrocketing, leading to what many call a “reviewer crisis.” In this high-pressure environment, the figure of Reviewer 2 has become a cultural shorthand for the systemic flaws of the process—bias, harshness, and lack of constructive engagement.

However, the rapid integration of Large Language Models (LLMs) and Explainable AI (XAI) into editorial workflows has shifted the conversation. Is AI becoming a digital version of the harsh Reviewer 2? Or is it a tool for radical transparency? This article examines the capabilities and limitations of AI in peer review, the reality of the Reviewer 2 stereotype, and why the “AI Review Lottery” is the new variable every researcher must understand.

The Reviewer 2 Phenomenon: Deconstructing the Myth

Before assessing AI, we must define the standard it is compared against. The “Reviewer 2” persona is widely believed to be uniquely negative. Yet, empirical evidence suggests this is more a product of cognitive bias than behavioral reality.

The Disconnect Between Anecdote and Data

Large-scale analyses, such as those by Worsham et al. (2022), indicate that Reviewer 2’s feedback does not differ significantly in tone or length from that of Reviewer 1 or 3. The stereotype likely persists for three reasons:

- Negativity Bias: Authors remember one harsh comment more vividly than three constructive ones.

- The Audience Effect: In open peer review, the perceived harshness often diminishes as accountability increases (Romero Felizardo et al., 2022).

- Epistemic Respect: The meme stands in for a deeper problem. The real issues in peer review center on “epistemic respect” (the fair evaluation of marginalized ideas) rather than on the numerical position of the reviewer (Krlev & Spicer, 2023).

Systemic Barriers and Exclusion

While Reviewer 2 might not be “meaner,” the peer review system itself perpetuates barriers for historically excluded groups. Studies from 2023 show that non-Western scholars often face a “double translation” burden, where their ideas must fit Western-centric models to gain acceptance (Barros & Alcadipani, 2023).

AI in Peer Review: Current Capabilities (2024–2026)

Artificial Intelligence has moved beyond simple spell-checks. In 2026, AI’s role in the editorial office is tripartite: Technical Screening, Reviewer Matching, and Preliminary Quality Assessment.

1. Technical Screening and Integrity

AI tools excel at the “grunt work” that consumes human time:

- Plagiarism & Image Manipulation: Detecting sophisticated AI-generated fraud (Gralha & Pimentel, 2024).

- Format Compliance: Ensuring manuscripts meet journal-specific style guides.

- Statistical Checks: Verifying p-values and data consistency, though deep methodological critique remains a human domain (Spitzer, 2026).
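As an illustration of the kind of statistical check described above, here is a minimal sketch that recomputes a two-sided p-value from a reported z statistic and compares it with the value stated in a manuscript. The function names and the tolerance are assumptions made for this example; they do not describe any specific screening tool cited in this article, and real tools also handle other test statistics.

```python
import math


def two_sided_p_from_z(z: float) -> float:
    """Two-sided p-value for a standard normal test statistic."""
    # For Z ~ N(0, 1): P(|Z| >= |z|) = erfc(|z| / sqrt(2)).
    return math.erfc(abs(z) / math.sqrt(2))


def p_value_is_consistent(z: float, reported_p: float, tolerance: float = 0.001) -> bool:
    """True if the reported p-value is within `tolerance` of the recomputed one.

    The tolerance is an assumed allowance for rounding in the manuscript.
    """
    return abs(two_sided_p_from_z(z) - reported_p) <= tolerance


# A manuscript reporting z = 1.96 with p = 0.05 passes the check,
# while the same statistic reported with p = 0.01 is flagged.
print(p_value_is_consistent(1.96, 0.05))
print(p_value_is_consistent(1.96, 0.01))
```

A flagged mismatch is only a prompt for a human to look closer; it says nothing about whether the chosen test was appropriate in the first place.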

2. The Efficiency of Reviewer Matching

AI-based matching systems have significantly reduced the “selection time” for editors. By using interdisciplinary topic detection models, journals can now find niche experts across global databases, potentially reducing the “old boys’ club” bias in reviewer selection (Farber, 2024; Xiao et al., 2023).
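To make the idea of automated matching concrete, the sketch below ranks candidate reviewers by the cosine similarity between a manuscript abstract and each reviewer's keyword profile. This is a deliberately simple bag-of-words stand-in, not the topic detection models used in the cited studies; the reviewer names and profiles are hypothetical.

```python
import math
import re
from collections import Counter


def term_counts(text: str) -> Counter:
    """Lowercase the text and count alphabetic tokens."""
    return Counter(re.findall(r"[a-z]+", text.lower()))


def cosine_similarity(a: Counter, b: Counter) -> float:
    """Cosine similarity between two term-count vectors (0.0 if either is empty)."""
    dot = sum(a[term] * b[term] for term in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def rank_reviewers(abstract: str, reviewer_profiles: dict[str, str]) -> list[tuple[str, float]]:
    """Return (reviewer, score) pairs sorted from best to worst match."""
    query = term_counts(abstract)
    scores = [
        (name, cosine_similarity(query, term_counts(profile)))
        for name, profile in reviewer_profiles.items()
    ]
    return sorted(scores, key=lambda pair: pair[1], reverse=True)


# Hypothetical reviewer profiles, for illustration only.
profiles = {
    "reviewer_a": "peer review bias bibliometrics scholarly publishing",
    "reviewer_b": "protein folding molecular dynamics simulation",
}
ranking = rank_reviewers("algorithmic bias in scholarly peer review", profiles)
print(ranking[0][0])  # reviewer_a shares the most terms with the abstract
```

Because the score depends only on topical overlap, it ignores who the reviewer knows, which is the property that could help reduce network-driven selection. It also inherits any gaps in the underlying reviewer database.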

3. LLMs and Manuscript Quality Assessment

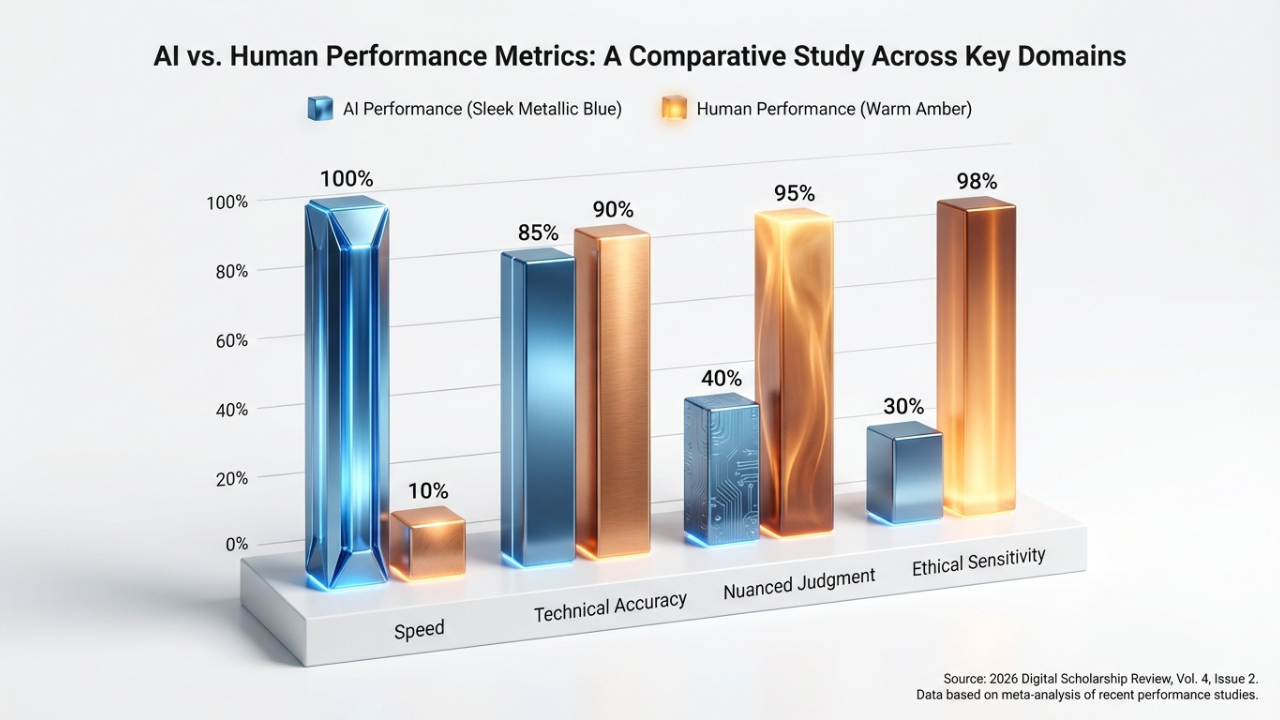

Recent trends show LLMs being used for initial screening of research significance. While these models offer high internal consistency, they often struggle with nuanced persuasiveness (AI cannot yet feel the “weight” of a revolutionary argument) and ethical dimensions (Doskaliuk et al., 2025).

The “AI Review Lottery”: A New Source of Variance

One of the most striking findings from the 2025 academic cycle is what researchers call the “AI Review Lottery.” Contrary to the “harsh Reviewer 2” trope, AI-assisted reviews are measurably more lenient than purely human ones.

According to Russo et al. (2025), papers reviewed with AI assistance see an acceptance rate increase of approximately 4.9 percentage points near the threshold. This suggests that while AI is efficient, it may be “too nice”—failing to catch subtle methodological flaws that a seasoned human expert would spot.
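A gain of 4.9 percentage points is not the same as a 4.9% relative increase, and the gap between the two depends on the baseline. The short sketch below works through the arithmetic. The 30% baseline acceptance rate is a hypothetical value chosen for illustration; it is not a figure reported by Russo et al. (2025).

```python
def apply_percentage_point_gain(baseline_rate: float, gain_points: float) -> float:
    """Add a gain given in percentage points to a rate given as a fraction."""
    return baseline_rate + gain_points / 100


def relative_change_percent(baseline_rate: float, new_rate: float) -> float:
    """Relative change from baseline to new rate, expressed in percent."""
    return (new_rate - baseline_rate) / baseline_rate * 100


# Assumption for illustration only: a 30% baseline acceptance rate.
HYPOTHETICAL_BASELINE = 0.30

new_rate = apply_percentage_point_gain(HYPOTHETICAL_BASELINE, 4.9)
relative = relative_change_percent(HYPOTHETICAL_BASELINE, new_rate)

print(f"new acceptance rate: {new_rate:.1%}")
print(f"relative increase:   {relative:.1f}%")
```

Under that assumed baseline, the same 4.9-point shift corresponds to a relative increase of roughly 16%, which is why the unit matters when the figure is quoted.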

| Feature | Human Reviewer | AI Reviewer (Current State) |
|---|---|---|
| Tone | Variable (often perceived as harsh) | Consistently polite/neutral |
| Methodological Depth | High (detects subtle flaws) | Medium (detects statistical outliers) |
| Novelty Assessment | Subjective but insightful | Relies on historical data patterns |
| Consistency | Low (inter-rater reliability issues) | High (repeatable results) |
| Bias | Social/Cognitive biases | Algorithmic/Training data bias |
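The “Consistency” row refers to inter-rater reliability. One common way to quantify it is Cohen's kappa, which corrects the raw agreement between two reviewers for the agreement expected by chance. The sketch below is a minimal implementation; the recommendation labels are made up for illustration and do not come from any study cited here.

```python
from collections import Counter


def cohens_kappa(ratings_a: list[str], ratings_b: list[str]) -> float:
    """Cohen's kappa for two raters who labeled the same items."""
    if len(ratings_a) != len(ratings_b) or not ratings_a:
        raise ValueError("Both raters must label the same non-empty set of items.")
    n = len(ratings_a)

    # Observed agreement: share of items where both raters gave the same label.
    observed = sum(a == b for a, b in zip(ratings_a, ratings_b)) / n

    # Chance agreement: product of each rater's marginal label frequencies.
    counts_a = Counter(ratings_a)
    counts_b = Counter(ratings_b)
    labels = counts_a.keys() | counts_b.keys()
    expected = sum(counts_a[label] * counts_b[label] for label in labels) / (n * n)

    if expected == 1.0:
        # Both raters used a single identical label; treat as perfect agreement.
        return 1.0
    return (observed - expected) / (1 - expected)


# Hypothetical recommendations from two reviewers on four manuscripts.
reviewer_1 = ["accept", "reject", "accept", "reject"]
reviewer_2 = ["accept", "reject", "reject", "reject"]
print(cohens_kappa(reviewer_1, reviewer_2))
```

A value near 1 indicates strong agreement and a value near 0 indicates agreement no better than chance. The same measure can be applied to repeated runs of an AI reviewer to check whether its results are in fact repeatable.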

Ethical Stakes and the FAIR Framework

The integration of AI isn’t just a technical challenge; it’s a governance crisis. How do we ensure that AI doesn’t simply automate existing prejudices? To address these concerns, the FAIR Framework (Grünebaum et al., 2025) advocates for:

- Formal Transparency: Disclosing when AI is used.

- Accountability: Humans must sign off on all final decisions.

- Integrity: AI should not be allowed to “write” the final verdict.

- Reliability: Continuous auditing of AI models for bias against non-Western authors.
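One way an editorial system might operationalize these four principles is as an automated checklist applied to every review record. The sketch below is hypothetical: the `ReviewRecord` fields and the `fair_violations` function are assumptions invented for this example and are not part of the framework as published by Grünebaum et al. (2025).

```python
from dataclasses import dataclass


@dataclass
class ReviewRecord:
    """Hypothetical metadata an editorial system could store per review."""
    ai_used: bool
    ai_use_disclosed: bool
    human_signed_off: bool
    verdict_written_by_ai: bool
    model_bias_audited: bool


def fair_violations(record: ReviewRecord) -> list[str]:
    """Return the principles a review record fails, as readable messages."""
    violations = []
    if record.ai_used and not record.ai_use_disclosed:
        violations.append("transparency: AI use was not disclosed")
    if not record.human_signed_off:
        violations.append("accountability: no human signed off on the decision")
    if record.verdict_written_by_ai:
        violations.append("integrity: the final verdict was written by AI")
    if record.ai_used and not record.model_bias_audited:
        violations.append("reliability: the AI model has no bias audit on record")
    return violations


# An AI-assisted review that was never disclosed fails only the first check.
record = ReviewRecord(
    ai_used=True,
    ai_use_disclosed=False,
    human_signed_off=True,
    verdict_written_by_ai=False,
    model_bias_audited=True,
)
print(fair_violations(record))
```

A checklist like this can only confirm that the recorded metadata is consistent with the policy. Whether the disclosures themselves are truthful remains a matter for human governance.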

Critical Discussion: Information Gain for 2026

If AI is more lenient and Reviewer 2 isn’t actually harsher, why does the peer review process still feel so broken? The answer lies in Structural Load. The “Reviewer 2” meme is a symptom of a system that is over-leveraged. AI can alleviate the load (efficiency), but it cannot fix the fundamental problem of epistemic respect.

Original Synthesis: AI’s greatest value in 2026 is not as a “Reviewer,” but as a “Reviewer Assistant.” By handling the technical audit, AI frees up human experts to do what they do best: engage in the high-level, creative, and critical synthesis that defines scientific progress.

Frequently Asked Questions

Is AI currently replacing human peer reviewers?

No. While AI tools are used for initial screening and technical checks, no major high-impact journal has replaced human experts with AI. The consensus (Lee et al., 2025) is that human oversight is essential for maintaining research integrity.

Are AI-generated peer reviews allowed?

Most publishing policies (COPE guidelines) currently prohibit “ghost-writing” review comments with AI. Disclosure is mandatory, and AI cannot be listed as an author or a primary reviewer (Chou, 2025).

Does AI help or hurt researchers from non-English speaking backgrounds?

It is a double-edged sword. AI can help level the playing field by providing language editing support, but algorithmic bias in screening tools may still favor Western-centric research paradigms if not properly governed.

How can I tell if my reviewer used AI?

Not with certainty. Stylometry tools can flag text that is likely AI-generated, but their output is probabilistic and should not be treated as proof. Common signs include overly generic praise, a lack of specific references to figures or data in the manuscript, and highly repetitive sentence structure.

Conclusion & Future Outlook

AI is not “another Reviewer 2.” It lacks the persona, the bite, and—critically—the deep understanding required to be a true gatekeeper of knowledge. However, as the “AI Review Lottery” shows, its integration is already changing who gets published and why.

The future of academia lies in Dynamic Hybrid Systems. We must move away from the meme of the “harsh reviewer” and toward a system of “collaborative quality assurance.” By 2030, we expect blockchain-verified, AI-assisted, and human-led peer review to be the standard, ensuring that every manuscript is judged on its merit, not its origin.

References

- Worsham, C., et al. (2022). An Empirical Assessment of Reviewer 2. Inquiry. https://doi.org/10.1177/00469580221090393

- Doskaliuk, B., et al. (2025). Artificial Intelligence in Peer Review: Enhancing Efficiency While Preserving Integrity. Journal of Korean Medical Science. https://doi.org/10.3346/jkms.2025.40.e92

- Russo, G., et al. (2025). The AI Review Lottery: Widespread AI-Assisted Peer Reviews Boost Paper Scores and Acceptance Rates. Proceedings of the ACM on Human-Computer Interaction. https://doi.org/10.1145/3757667

- Grünebaum, A., et al. (2025). The FAIR framework: ethical hybrid peer review. Journal of Perinatal Medicine. https://doi.org/10.1515/jpm-2025-0285

- Krlev, G., & Spicer, A. (2023). Reining in Reviewer Two: How to Uphold Epistemic Respect in Academia. Journal of Management Studies. https://doi.org/10.1111/joms.12905

- Farber, S. (2024). Enhancing peer review efficiency: A mixed-methods analysis of AI-assisted reviewer selection. Learned Publishing. https://doi.org/10.1002/leap.1638

- Schilke, O., & Reimann, M. (2025). The transparency dilemma: How AI disclosure erodes trust. Organizational Behavior and Human Decision Processes. https://doi.org/10.1016/j.obhdp.2025.104405